YouTube Says It Removed 5 Times The Number of Harmful Videos In Q2

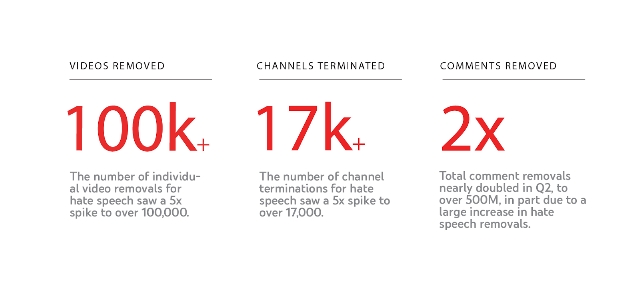

YouTube ramped up its efforts to remove harmful content over the last quarter: a new report shows the company removed over 100,000 individual videos.

That is nearly 5 times the number of videos removed in the first quarter of the year, reflecting a big shift in activity following a new hate speech policy introduced in June.

Additionally, the company says it has removed over 17,000 channels and 500 million comments in Q2.

Notably, YouTube says much of the harmful content is flagged by machine learning technology, which allows the company to remove it before it is ever seen by actual users. According to the company’s data, more than 87% of the videos removed in Q2 were first flagged by YouTube’s automated systems.

The report also mentions that an update to YouTube’s spam detection tools has driven a 50% increase in the number of channels removed for violating the platform’s spam guidelines.

YouTube says the report is only the first in a four-part series which will cover the company’s guiding principles:

- Remove content that violates policies

- Raise up authoritative voices

- Reward eligible creators

- Reduce the spread of borderline content

As such, you can expect to see more details in the near future about how YouTube is working to curate the best platform possible.
