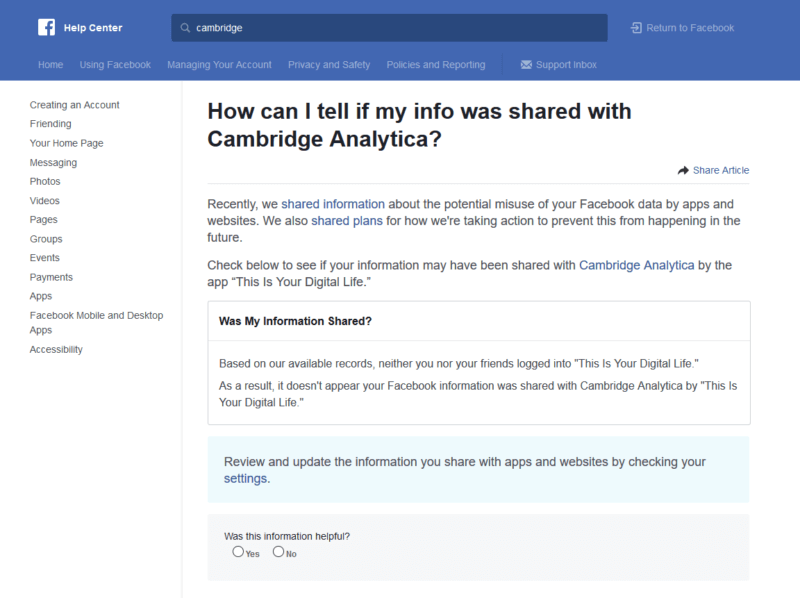

Days before Facebook CEO Mark Zuckerberg is set to testify to Congress about the social network’s role in allowing Cambridge Analytica to exploit user data, Facebook is working to make it easy to see if your information was shared with the scandal-plagued analytics firm.

Facebook has published a new section in its help center called “How can I tell if my info was shared with Cambridge Analytica.” You can also find the page quickly by searching for “Cambridge” or “Cambridge Analytica” in the Facebook search bar.

If you’re logged into your Facebook account, the page will automatically tell you whether your data may have been shared through the “This is your digital life” app.

Since reports emerged that Cambridge Analytica may have misused user data, Facebook’s relationship with the firm has come under scrutiny. In response, the social network has taken several steps to try to regain the public’s trust, including launching this page. It has also introduced a data abuse bounty program that lets users report app developers who may be misusing data.

Questions will likely remain long after Mark Zuckerberg’s testimony tomorrow, but at least you can now check whether your account details were shared with Cambridge Analytica.