Source: Jason Tamez

Does Google control the internet? Of course, no one controls the entire internet, but the major search engine has a huge influence on how we browse the web. So it is interesting to hear a Google representative entirely downplay the company’s role in managing content online.

Barry Schwartz noticed the statement in a Google Webmaster Help forums thread about removing content from showing up in Google. It’s a fairly common question, but the response had some particularly interesting information. According to Eric Kuan from Google, the search engine doesn’t play a part in controlling content on the internet.

His statement reads:

Google doesn’t control the contents of the web, so before you submit a URL removal request, the content on the page has to be removed. There are some exceptions that pertain to personal information that could cause harm. You can find more information about those exceptions here: https://support.google.com/websearch/answer/2744324.

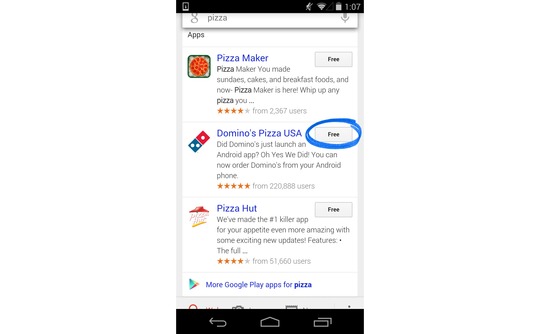

Now, what Kuan said is technically true. Google doesn’t have any control over what is published to the internet. But Google is the largest gateway to all that content, and it plays a role in two-thirds of searches.

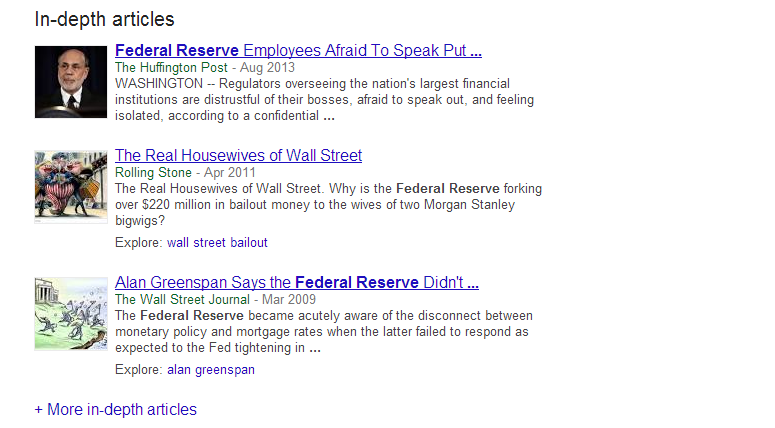

This raises some notable questions for website owners and searchers alike. We rarely consider how much of an influence Google has in deciding what information we absorb, but they hold some very important keys to areas of the web we otherwise wouldn’t find.

As a publisher, you are obliged to follow Google’s guidelines in order to be made visible to the huge wealth of searchers. It is an agreement which often toes uncomfortable lines as the search engine has grown into a massive corporation encompassing many aspects of our lives and future technology.

When you begin marketing and optimizing your site online to become more visible, you should keep this agreement in mind. A lot of people think of Google as a system to take advantage of in order to reach a larger audience. While you can attempt to do that, you would be breaking the agreement with the search engine, and it can penalize your efforts at any time.

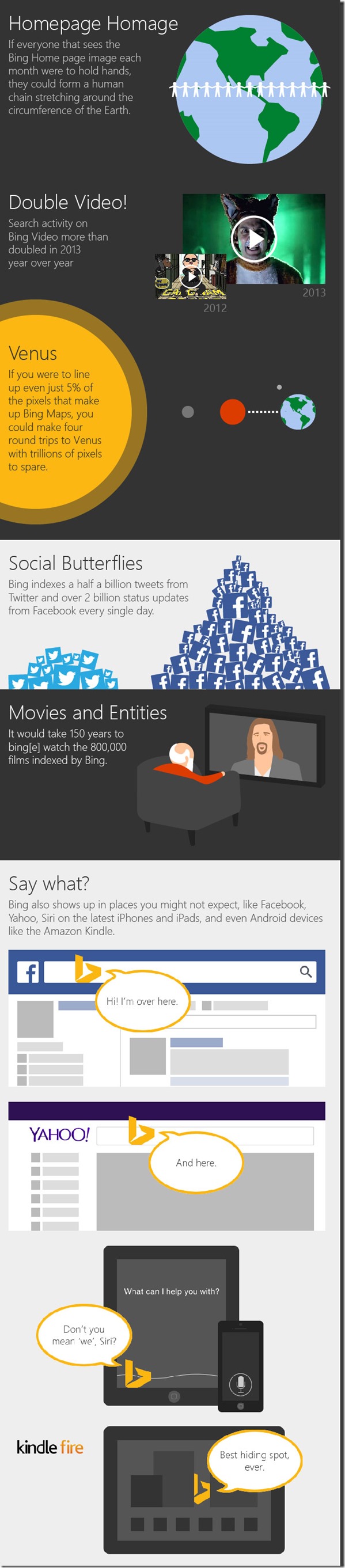

For one, you have probably heard how important social media is to establishing a brand online and engaging internet users, but you might not know that Bing is often more attentive to social media than Google. While Google’s rankings may factor in social media data for website owners, actual users see very little social media presence outside of YouTube and Google+.

Usually Matt Cutts, esteemed Google engineer and head of Webspam, uses his regular videos to answer questions which can have a huge impact on a site’s visibility. He recently answered questions about using the Link Disavow Tool if you haven’t received a manual action, and he often delves into linking practices which Google views as spammy. But earlier this week he took to YouTube to answer a simple question and give a small but unique tip webmasters might keep in mind in the future.
