Now that the dust has settled after some extended debate, it seems clear that responsive design is here to stay. It won’t last forever, but it certainly isn’t a flashy trend that is going to fade away soon. It makes sense that responsive design has caught on the way it has, since it makes designing for the multitude of devices used to access the internet much easier than before.

A large share of the people accessing the internet at this very moment are doing so on a smartphone or tablet, but they aren’t all using the same devices. A normal website designed to look great on a desktop won’t look good on a smartphone, and similarly a site designed to work well on the new iPhone won’t look the same on a Galaxy Note 3.

This problem has two feasible solutions for designers. Either you can design multiple versions of a website, so that there is a workable option for smartphones, tablets, and desktops, or you can create a responsive website which will look good on every device. Both options require you to test your site on numerous devices to ensure it actually works well across the board, but with a responsive site you only have to design one site. The rest of the work is in tweaking the site to optimize it for individual devices.

That explains why designers love responsive design as a solution for the rapidly expanding range of ways to browse the internet, but our designs have to please other people as well. Thankfully, responsive design has benefits for everyone involved. It is even good for search engine optimization, which is unusual, since design and optimization do not normally work hand in hand. Saurabh Tyagi explains how responsive design benefits SEO as much as it does consumers.

Google Favors Responsive Sites

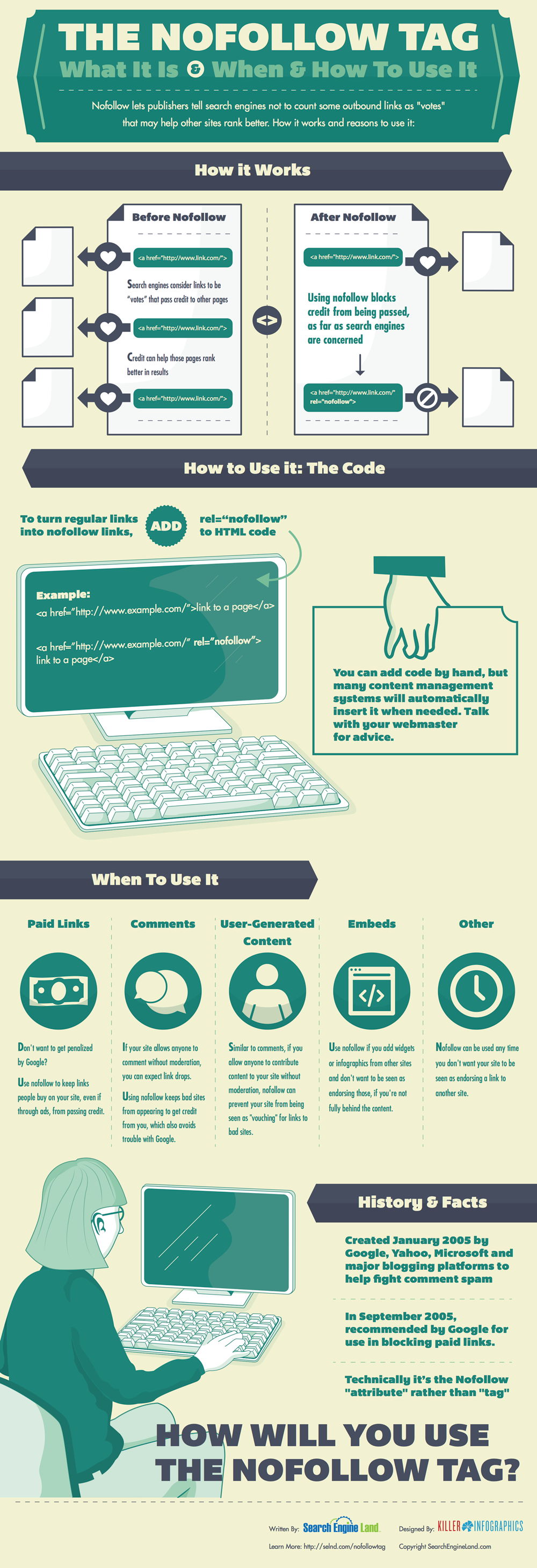

SEO professionals spend a lot of their time and effort simply trying to appease the Google Gods, or trying to follow the current best practices while also managing to outplay their competition. Google has officially included responsive design in its best practice guidelines and has issued public statements calling for websites to adopt the design strategy, so naturally SEOs have come to love it.

One of the biggest reasons Google loves responsive sites is that they allow websites to use the same URL for mobile and desktop, instead of redirecting users. A site with separate URLs will have a harder time gaining in the rankings than one with a single functional URL.

Improves the Bounce Rate

Getting users to stay on your page is actually easier than you might think. If you represent yourself honestly to search engines and offer a functional, readable, and generally enjoyable website, users who click through to your page are likely to stay there. By ensuring your website is functional and enjoyable on nearly every device, you make users less likely to hit the back button.

Save on SEO

Having a separate mobile site from your desktop site means double the SEO work. Optimization is not cheap, fast, or easy, so it doesn’t make sense to waste all that extra time and work on what are basically duplicate efforts. Instead of having to optimize two sites, responsive websites allow SEOs to put all their efforts into one site, saving you money and providing a more focused optimization effort.

Avoids Duplicate Content

When you are managing two sites for the same business, it is highly likely you will eventually end up accidentally placing duplicate content on one of them. If this becomes a regular problem, you can expect penalties from search engines, which are easily avoided by simply having one site. Responsive design also makes it easier to direct users to the right content. One of Google’s biggest mobile pet peeves at the moment is the practice of consistently redirecting mobile users to the front page of the mobile site, rather than to the mobile version of the content they asked for. Responsive design avoids these types of issues altogether.
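For sites that still run a separate mobile version, the redirect problem described above can be illustrated with a minimal Python sketch. The hostname `m.example.com` and both function names are made up for illustration; they are assumptions, not part of any real site or of Google's guidelines. The first function shows the front-page redirect pattern, while the second keeps the path and query so the visitor lands on the content they asked for.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical mobile hostname, used only for illustration.
MOBILE_HOST = "m.example.com"


def redirect_to_mobile_home(desktop_url: str) -> str:
    """Problematic pattern: every mobile visitor is sent to the mobile front page."""
    parts = urlsplit(desktop_url)
    # The original path, query, and fragment are thrown away.
    return urlunsplit((parts.scheme, MOBILE_HOST, "/", "", ""))


def redirect_to_mobile_equivalent(desktop_url: str) -> str:
    """Preferred pattern: only the host changes, so the requested page is preserved."""
    parts = urlsplit(desktop_url)
    return urlunsplit(
        (parts.scheme, MOBILE_HOST, parts.path, parts.query, parts.fragment)
    )


if __name__ == "__main__":
    url = "https://www.example.com/blog/post?id=7"
    print(redirect_to_mobile_home(url))
    print(redirect_to_mobile_equivalent(url))
```

A responsive site needs neither function, because the same URL serves every device.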

There has been quite a bit of speculation ever since Matt Cutts publicly stated that Google wouldn’t be updating the PageRank meter in the Google Toolbar before the end of the year. PageRank has been assumed dead for a while, yet Google refuses to issue the death certificate, assuring us it currently has no plans to scrap the tool outright.

A couple of weeks ago, Google released an update directly aimed at the “industry” of websites which host mugshots, which many aptly called The Mugshot Algorithm. It was one of the more specific updates to search in recent history, and was basically meant to target sites aiming to extort money out of those who had committed a crime. Google purposefully targeted sites that were ranking well for names and displayed arrest photos, names, and details.

There have never been more opportunities for local businesses online than now. Search engines cater more and more to local markets as shoppers make more searches from smartphones to inform their purchases. But, in the more competitive markets that also means local marketing has become quite complicated.

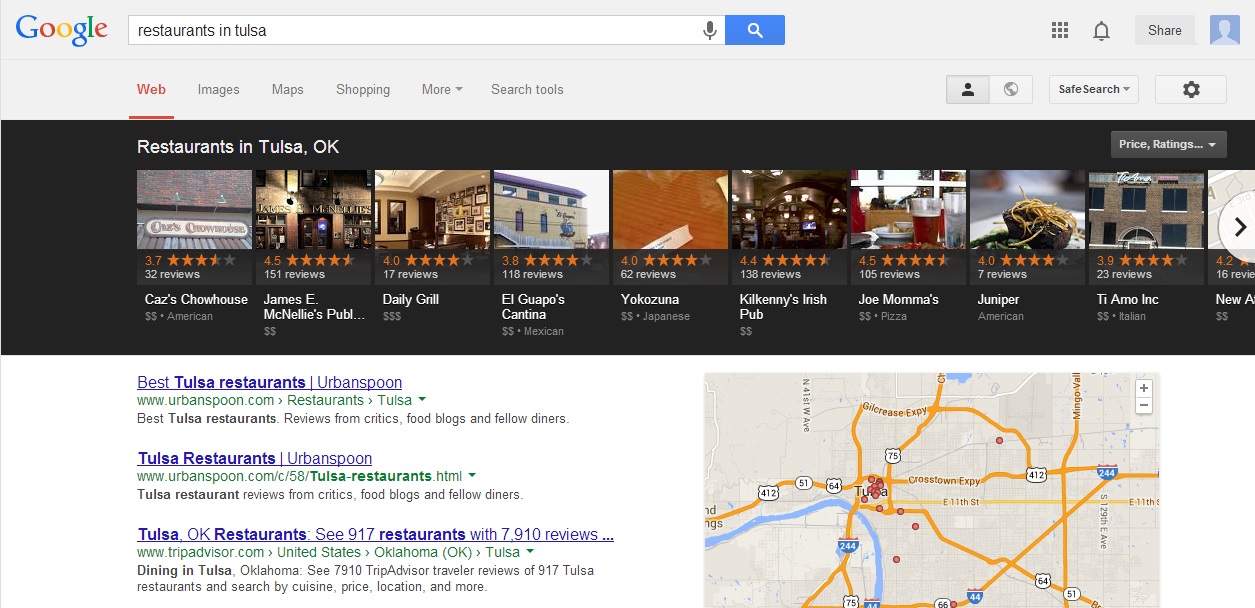

Google’s Carousel may seem new to most searchers, but it has actually been rolling out since June. That means enough time has passed for marketing and search analysts to really start digging in to see what makes the carousel tick.

Bing gave people more control over what shows up about them online last week when

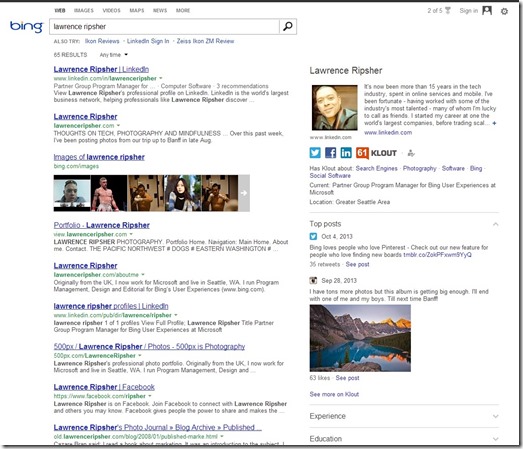
