Newcomers to SEO often feel intimidated by the complex, jargon-filled field of search engine optimization. There are countless articles explaining seemingly complicated concepts, ever-changing best practices, and constant warnings about the cost of making mistakes. It all makes SEO seem high-risk and altogether frightening.

But SEO doesn’t have to be that way. You can dive straight into the rabbit hole and try to make sense of it all if you want, but there are also many resources that break it down in simple terms, if you know where to find them. Pan Galactic Digital is one of those resources: they regularly publish “SEO 101” articles aimed at educating consumers, beginners, and anyone else who is interested.

SEO can seem unwieldy because it has to account for the countless ranking signals that Google and Bing use to rank sites. In reality, though, those signals can all be grouped into two categories: on-page and off-page SEO.

On-Page SEO

On-page SEO is exactly what it sounds like. It is made up of all the areas you can optimize directly on each individual page of your site, especially content, code, and site architecture.

You are most likely familiar with content, because it is what you interact with most often. Content is everything you read and every image you see directly on the page. Is it high quality? Does the text inform and engage? Does it use the keywords you want to rank for in a search engine, without overusing them so that it reads unnaturally? High-quality content offers value to the viewer.

You can also optimize some of the HTML code on your page around chosen keywords. Page titles, image alt text, and headers can all be optimized to help search engines understand what your page is about and what it offers. However, just as with content, stuffing these areas with keywords isn’t advised.
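To make those elements concrete, here is a minimal sketch that uses Python’s standard-library `html.parser` to pull the title, the headings, and any images missing alt text out of a page’s HTML. It assumes you already have the HTML as a string. The `OnPageAudit` class, the `audit_page` helper, and the sample page are hypothetical illustrations, not part of any SEO tool. The sketch only reports what is present and makes no judgment about keyword use.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collects the page title, heading text, and images without alt text."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.title = ""
        self.headings = []            # (tag, text) pairs in document order
        self.images_missing_alt = []  # src of each <img> with no or empty alt
        self._current = None          # tag whose text is being captured
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img":
            # A missing alt attribute, or alt="", counts as missing.
            if not (attrs.get("alt") or "").strip():
                self.images_missing_alt.append(attrs.get("src", ""))
        elif tag == "title" or tag in self.HEADINGS:
            self._current = tag
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested tags (e.g. <em> in an <h1>) is kept too.
        if self._current:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._current:
            # Collapse runs of whitespace into single spaces.
            text = " ".join("".join(self._buffer).split())
            if tag == "title":
                self.title = text
            else:
                self.headings.append((tag, text))
            self._current = None


def audit_page(html):
    """Return the title, headings, and alt-less images found in `html`."""
    parser = OnPageAudit()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title,
        "headings": parser.headings,
        "images_missing_alt": parser.images_missing_alt,
    }


if __name__ == "__main__":
    sample = """
    <html><head><title>Handmade Oak Tables | Example Shop</title></head>
    <body>
      <h1>Handmade Oak Tables</h1>
      <img src="table.jpg" alt="Solid oak dining table">
      <img src="logo.png">
      <h2>Care instructions</h2>
    </body></html>
    """
    result = audit_page(sample)
    print(result["title"])               # Handmade Oak Tables | Example Shop
    print(result["headings"])            # [('h1', 'Handmade Oak Tables'), ('h2', 'Care instructions')]
    print(result["images_missing_alt"])  # ['logo.png']
```

Running a check like this on each page makes it easy to spot an empty title or an image with no description. Deciding whether the wording is natural is still a human judgment.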

Lastly, you have your site architecture: how your site is laid out, how its URLs are managed, and how quickly its pages load. You want to make sure search engines can easily crawl your site once they’ve found you. You will also want your URLs to incorporate important keywords that are relevant to your content.
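As a rough illustration of keyword-bearing URLs, the hypothetical `slugify` helper below turns a page title into a short, lowercase, hyphen-separated URL slug. It is a minimal sketch that assumes mostly Latin-script titles. The stop-word list and the six-word limit are arbitrary examples rather than rules, and many sites simply let their CMS generate slugs.

```python
import re
import unicodedata

# Hypothetical, deliberately small list of filler words to drop from slugs.
STOP_WORDS = {"a", "an", "and", "the", "of", "for", "to", "in", "on"}


def slugify(title, max_words=6):
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    # Split accented letters into base letter + accent, then drop non-ASCII.
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Keep runs of letters and digits; punctuation and spaces separate words.
    words = re.findall(r"[a-z0-9]+", text.lower())
    keywords = [w for w in words if w not in STOP_WORDS]
    return "-".join(keywords[:max_words])


if __name__ == "__main__":
    print(slugify("The 10 Best Café Tables for Small Apartments"))
    # → 10-best-cafe-tables-small-apartments
```

Dropping filler words keeps the slug short while leaving the descriptive keywords in place. Whether to drop them at all is a judgment call.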

Off-Page SEO

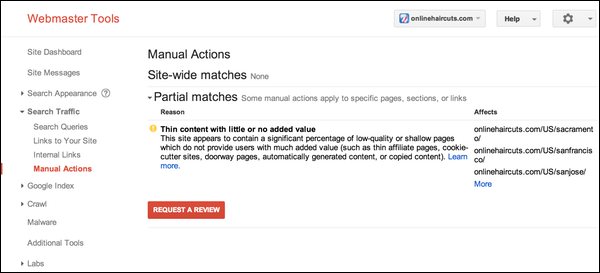

Off-page SEO is everything that happens outside your site, and when it is done properly, much of it is out of your direct control. The most talked-about example is linking. Google has historically weighed links to your site from other sites heavily as a sign of a quality site; they act roughly like votes in your site’s favor. However, their influence has since been partially reduced because many optimizers attempted link schemes, such as buying links or syndicating content on other websites in inappropriate ways. Never buy links or try to exploit a loophole to get them. They must be earned.

One of the fastest-growing off-page SEO signals is social media. It isn’t entirely clear how much social media presence directly affects your rankings compared to how much it simply brings you traffic, but there’s no arguing that well-managed social media is a great tool for your website and business presence.

Of course, you can dig deeper into these two categories and find many more ways that Google and Bing decide where to rank your webpages, but these basic factors are by far the most important. If you can get a handle on everything above, you will be well prepared for anything else you encounter moving forward.