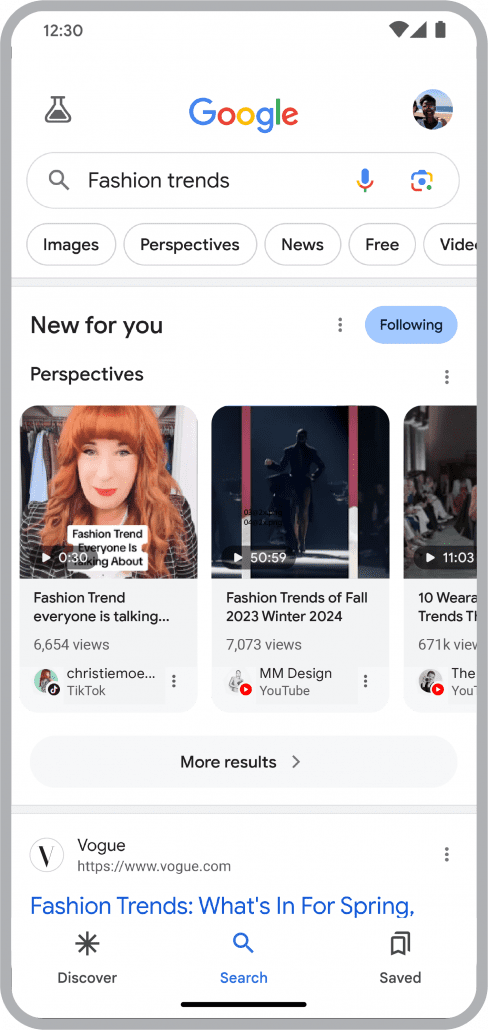

While a growing number of U.S. consumers are using TikTok for search, a new survey suggests the popular social network may not be as strong a challenger to Google as previously believed.

The survey, from Adobe Express, found that more people are using TikTok for search than in the company’s 2024 survey. However, it also found that fewer young users say they prefer TikTok to Google’s search engine.

The Study

The report comes from an Adobe Express survey published earlier this month and conducted in January 2026. It surveyed over 800 consumers and 200 small businesses in the U.S. about their search habits across various platforms including Google, TikTok, and ChatGPT.

It found that 49% of consumers report using TikTok as a search engine, an 8-point increase from 2024. However, the most notable findings were among Gen Z users.

Gen Z and TikTok as a Search Engine

While much has been made of the number of Gen Z users favoring TikTok over Google, the study shows that number is actually falling.

Among the Gen Z users surveyed, the share who said they were more likely to turn to TikTok than Google for a search fell from 8% in 2024 to 4% in 2026.

This isn’t to say Gen Z is using TikTok less for search, though. In fact, 65% of Gen Z users said they use TikTok as a search engine, and 25% said they found it effective for finding information. It’s just that they don’t necessarily prefer TikTok’s search tools over Google’s.

Instead, it seems that Gen Z is adopting a multiplatform approach to search – using the platform they feel is best or most convenient for specific searches.

ChatGPT Shows Growth as a Search Engine

While the number of people who prefer TikTok for search over Google fell, the survey suggests more users of every age group are turning to ChatGPT for search over Google.

According to the survey, 14% of users say they are more likely to use ChatGPT for search than Google. This held even when broken down by age group, with 12% of Gen Z, 15% of millennials, 15% of Gen X, and 14% of baby boomers saying the same.

What This Means

When a significant number of younger users started reporting using TikTok over Google, it caught the attention of many brands and marketers. However, the situation isn’t as simple as “TikTok will be the next big search engine.” Instead, it appears that users are turning to a variety of search platforms, with ChatGPT quickly growing as a significant player in search.

It is unclear whether the sale of TikTok’s U.S. operations or changes to the platform contributed to the decrease in those who favor the platform.

For brands looking to supplement their search marketing in the face of falling organic search traffic from Google, the answer seems to be investing in multiple platforms and ensuring they are getting picked up by AI tools – especially ChatGPT. That said, it will still likely be quite some time before any single platform dethrones Google as the biggest search engine.

For more, read the full report from Adobe Express here.